Update README.md

README.md CHANGED

|

@@ -76,9 +76,7 @@ with torch.inference_mode():

|

|

| 76 |

|

| 77 |

### Results

|

| 78 |

|

| 79 |

-

|

| 80 |

-

|

| 81 |

-

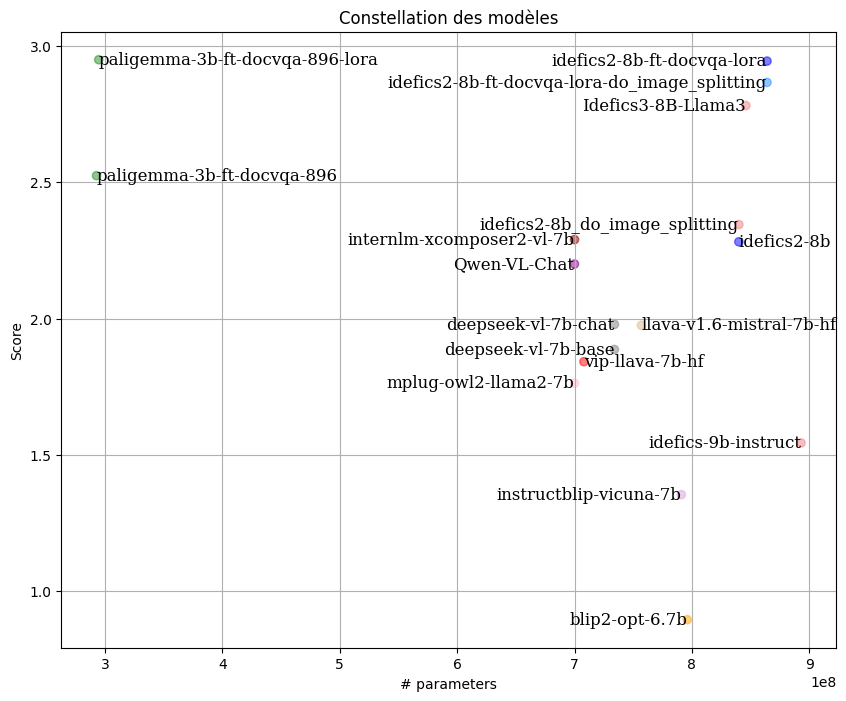

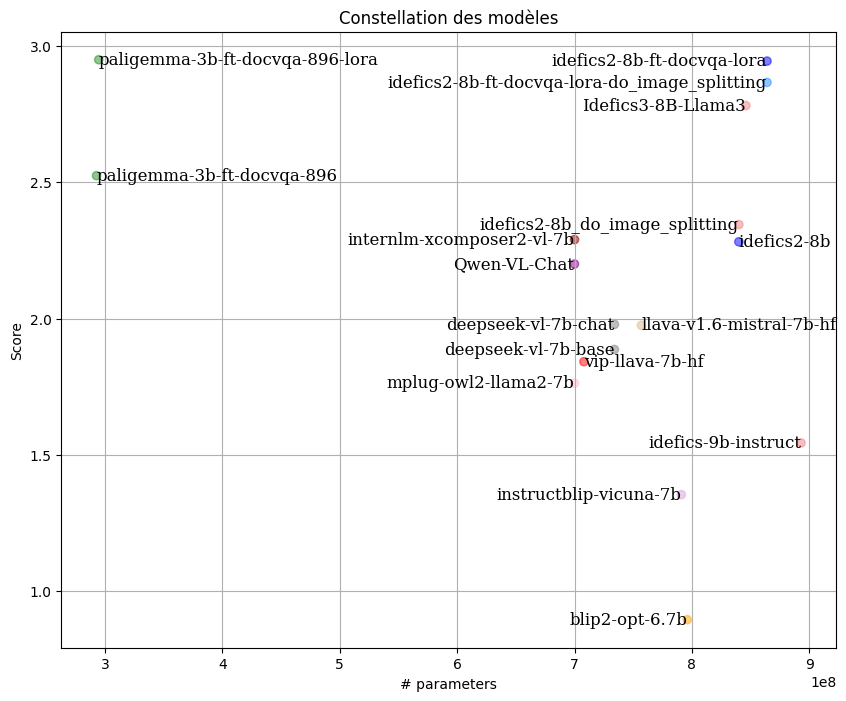

By following the LLM-as-Juries evaluation method, the following results were obtained using three judge models (GPT-4o, Gemini1.5 Pro, and Claude 3.5-Sonnet). These models were evaluated based on a well-defined scoring rubric specifically designed for the VQA (Visual Question Answering) context, with clear criteria for each score to ensure the highest possible precision in meeting expectations.

|

| 82 |

|

| 83 |

|

| 84 |

|

|

|

|

| 76 |

|

| 77 |

### Results

|

| 78 |

|

| 79 |

+

By following the LLM-as-Juries evaluation method, the following results were obtained using three judge models (GPT-4o, Gemini1.5 Pro, and Claude 3.5-Sonnet). These models were evaluated based on a well-defined scoring rubric specifically designed for the VQA context, with clear criteria for each score to ensure the highest possible precision in meeting expectations.

|

|

|

|

|

|

|

| 80 |

|

| 81 |

|

| 82 |

|
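
The README paragraph in this change describes scoring with a panel of three judge models against a rubric, but it does not say how the three judges' scores are combined. As a minimal sketch only, one common choice is to average the judges' scores per sample and then average over samples. The judge identifiers, the `jury_score` helper, and the numeric score scale below are all hypothetical and are not taken from the README.

```python
from statistics import mean

# Hypothetical judge identifiers; the README only names the three models.
JUDGES = ("gpt-4o", "gemini-1.5-pro", "claude-3.5-sonnet")


def jury_score(scores_by_judge: dict[str, list[float]]) -> float:
    """Average the judges' rubric scores per sample, then across samples.

    Mean pooling is an assumption; the README does not state the
    aggregation rule used for its results.
    """
    missing = [j for j in JUDGES if j not in scores_by_judge]
    if missing:
        raise ValueError(f"missing scores for judges: {missing}")

    columns = [scores_by_judge[j] for j in JUDGES]
    lengths = {len(c) for c in columns}
    if len(lengths) != 1 or 0 in lengths:
        raise ValueError("every judge must score the same non-empty sample set")

    # zip(*columns) yields one tuple of three judge scores per sample.
    per_sample = [mean(sample_scores) for sample_scores in zip(*columns)]
    return mean(per_sample)
```

Called with one list of per-sample scores per judge, the helper returns a single pooled score. Majority voting or a median would be equally plausible alternatives if the rubric scores are coarse.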